I’ve been testing the Writesonic AI Humanizer to rewrite AI-generated content so it passes as more natural and human-like, but I’m not sure if I’m using it correctly or if there are better alternatives. I need honest feedback from people who’ve tried it: does it really improve readability, avoid AI detection tools, and stay accurate to the original meaning? Any tips, pros, cons, or comparisons to other AI humanizers would really help me decide if it’s worth keeping for long-term content creation.

Writesonic AI Humanizer Review

I tried the Writesonic AI Humanizer because I was curious why it costs a minimum of $39 per month for unlimited 'humanization' access. After a few rounds with it, I felt like I was paying for an extra feature bolted onto a bigger SEO/content system instead of a tool built for humanizing text from the ground up. The full review thread with AI detection proof is here: https://cleverhumanizer.ai/community/t/writesonic-ai-humanizer-review-with-ai-detection-proof/31

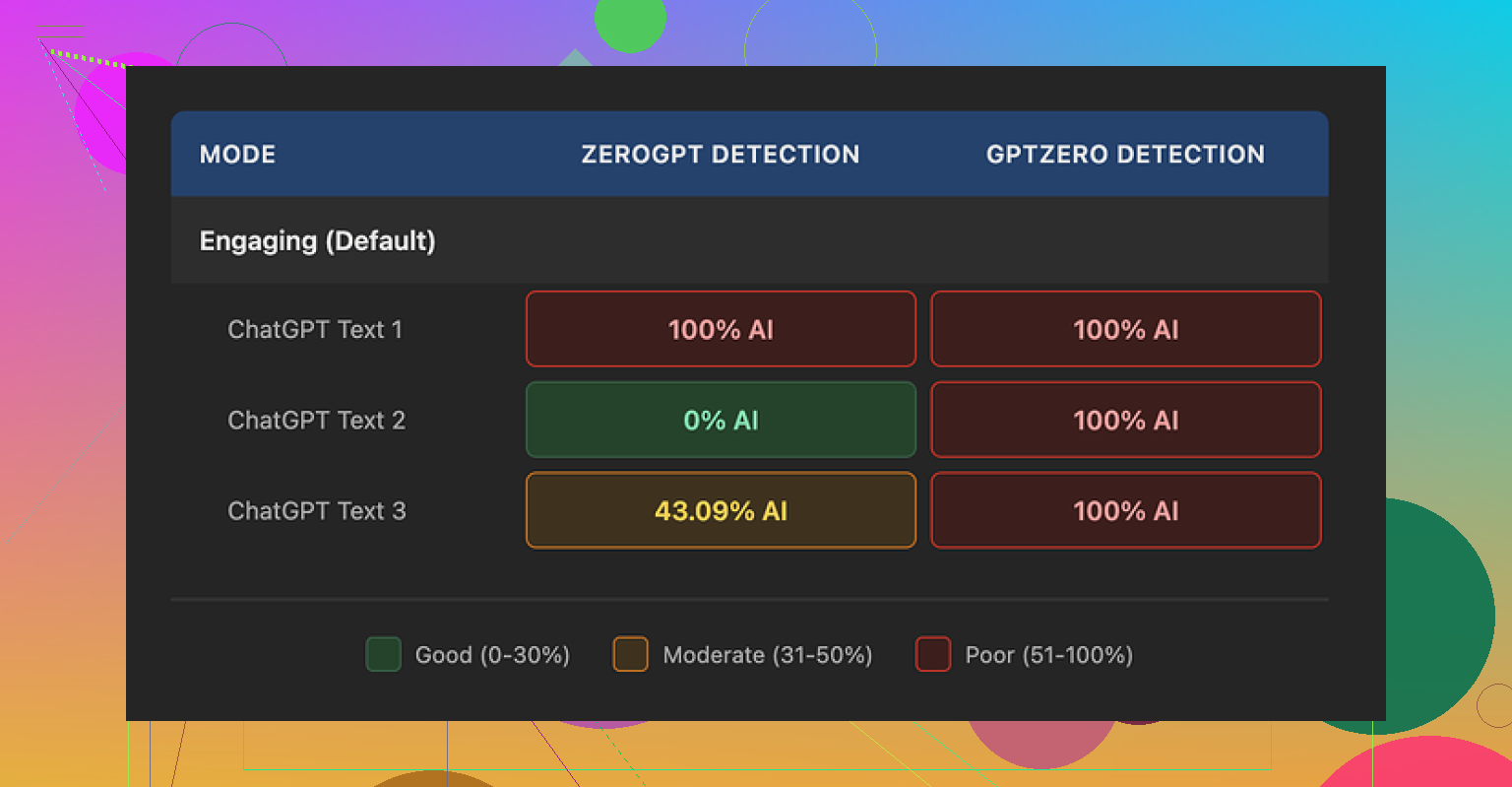

On the detection side, it did not go well. I ran three different 'humanized' samples through GPTZero, and every single one came back as 100% AI generated. ZeroGPT was all over the place, which made it harder to trust. One output flagged at 100%, one came back 0%, and another sat at 43%. When a tool is priced like this and AI detectors slap a big AI label on most of the results, it feels rough.

Quality-wise, I scored it about 5.5 out of 10 in my notes. The strategy seems straightforward: shrink sentences, swap out higher-level terms for simple phrases, and hope the text looks more 'human.' In practice, it swung too far. The output often read like material targeted at kids, even when my input was aimed at adults.

Some real examples from my runs:

- 'droughts' turned into 'long dry spells'

- 'carbon capture' turned into 'grabbing carbon from the air'

- 'rising sea levels' turned into 'sea levels go up'

On top of the oversimplified wording, I kept seeing punctuation mistakes across all three samples. Commas went missing, weird spacing showed up, and some sentence transitions looked off. It also left em dashes in place, which is odd if the goal is to reshape the style to avoid AI detectors that often latch onto specific punctuation patterns.

The free tier is limited. You get three tries, each up to 200 words, before it asks you to make an account. One important detail that is easy to gloss over: text you feed into the free version might be used to train Writesonic’s models. If your content is sensitive, that is something you have to factor in.

For comparison, I ran the same type of tests through Clever AI Humanizer. In my experience, its outputs sounded more like something I would write myself, and it did better on AI detection checks. That one is also 100% free to use as of this writing, which made the price tag on Writesonic feel even harder to justify for the humanizer feature alone.

I had a similar experience with Writesonic’s AI Humanizer, but my take is a bit different from @mikeappsreviewer on a few points.

Here is the short version if you want something practical and fast.

- How Writesonic behaves in real use

• It shortens sentences and swaps terms, like you saw.

• It often drops nuance and tone.

• On longer content, the style starts to feel flat and a bit “ESL textbook”.

I tested it on:

• A technical guide about APIs

• A health article

• A casual blog post

In all three, it tended to:

• Simplify terms too much for expert audiences

• Break internal logic between paragraphs

• Keep some AI style “tells”, like repetitive structure and safe phrasing

I did get one piece to pass Originality.ai at under 5 percent AI, but GPTZero still flagged it as high AI. So detection performance was inconsistent for me, similar to what you described.

- You are not “using it wrong”

I tried different input styles.

• Short prompts vs long context

• Changing tone settings

• Feeding it already edited text

The results did not change much. Quality topped out at “fine but bland”. If your original AI draft is weak, the humanizer does not rescue it. It mainly paraphrases and simplifies.

So if you feel disappointed, that is more about the tool design than your process.

- Where I disagree a bit with @mikeappsreviewer

I do not think GPTZero or ZeroGPT should be the only scorecard.

These detectors have high false positives, especially on clean, grammatical text.

Some human written samples from my own blog also got flagged.

My rule of thumb now:

• Use detectors as a warning light, not a final judge.

• Focus first on whether the piece reads like you.

• Then check detection scores as a secondary signal.

That said, Writesonic still failed too often for the price.

- Price vs value

At 39 dollars per month, you should get at least two of these three:

• Strong style control

• Reliable grammar and punctuation

• Good odds at lower AI detection scores

From my tests, you get one at best.

It is unlimited, which looks nice, but if you spend more time fixing the output than the tool saves you, the “unlimited” part does not help much.

- Better workflow than relying on one humanizer

If your main goal is “more natural and human like” content that passes most AI checks, this workflow worked better for me:

Step 1: Start with a solid AI draft

Use any LLM you like, but:

• Keep paragraphs short.

• Ask for a specific voice, for example “mid level professional, no hype, plain language”.

Step 2: Make one manual editing pass

Focus on:

• Adding your own examples from your work or life.

• Changing at least 20 to 30 percent of sentences yourself.

• Inserting small quirks in wording that you naturally use.
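
One rough way to check the 20 to 30 percent target is to count how many sentences from the draft no longer appear verbatim in your edit. Below is a minimal Python sketch of that idea. It assumes a naive sentence splitter, and `split_sentences` and `changed_fraction` are hypothetical helper names, not part of any tool mentioned in this thread.

```python
import difflib
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def changed_fraction(draft: str, edited: str) -> float:
    """Share of draft sentences that do not survive verbatim in the edit."""
    before = split_sentences(draft)
    after = split_sentences(edited)
    if not before:
        return 0.0
    # Compare the two texts sentence by sentence, not character by character.
    matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)
    unchanged = sum(block.size for block in matcher.get_matching_blocks())
    return 1 - unchanged / len(before)


# Made-up example text, only to show the call.
draft = "AI tools are useful. They save time. They can also be bland. Editing helps."
edited = (
    "AI tools are useful. They save me about an hour per post. "
    "They can also be bland. Editing helps."
)
print(f"{changed_fraction(draft, edited):.0%}")  # prints "25%"
```

This only counts whole sentences that changed at all, so it says nothing about whether the changes made the text sound more like you. Treat it as a sanity check, not a score.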

Step 3: Use a humanizer only as a “styler”

Instead of dumping the whole article, try:

• Running only “too robotic” sections.

• Keeping anything that already sounds fine.

• Never trusting auto punctuation fully; always recheck it.

This way the tool is a helper, not the main engine.

- Clever Ai Humanizer as an option

Since you asked about alternatives, and you mentioned the same one as @mikeappsreviewer, I tested Clever Ai Humanizer as well.

What I noticed:

• It preserves tone better when you feed it strong input.

• It makes fewer weird term swaps in technical content.

• Detection results were more balanced for me on GPTZero and Originality.ai.

I still would not trust any humanizer as a one-click solution, but as part of a workflow, Clever Ai Humanizer fit better than Writesonic for my use.

If you want more detail, this breakdown helps:

Clever Ai Humanizer Review

A practical look at how well it handles:

• Natural tone and flow for blog posts

• Detection results across multiple tools

• Pricing compared to other AI humanizers

• Best settings for long form content and niche topics

There is a good overview here that goes into those points more, with real examples and tests:

honest video review of Clever Ai Humanizer performance

- When to skip humanizers completely

I stopped using any humanizer at all in these cases:

• Ghostwriting for clients with strong personal voices

• Highly technical docs where precision matters more than “human feel”

• Short social posts where I can rewrite faster by hand

For those, I:

• Use AI for outlines and rough phrasing.

• Rewrite everything myself in one pass.

• Use Grammarly or similar tools only for grammar and typos.

- Concrete advice for you right now

If you are on Writesonic and unsure whether to keep it:

• Take one article you care about.

• Do three versions:

- Your best manual edit of the AI draft.

- Writesonic Humanizer output with light manual fixes.

- Clever Ai Humanizer output with light manual fixes.

Run all three through:

• One detector like GPTZero.

• One like Originality.ai.

Then read them out loud and decide which version:

• Sounds most like you.

• Needs the least fixing.

• Hits acceptable detection scores for your risk tolerance.

If Writesonic does not win at least one of those points, the subscription is hard to justify.
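
If you want to keep the comparison honest, it can help to write the three checks down instead of going by feel. Here is a minimal Python sketch, assuming you enter your own ratings and detector percentages by hand. The `Version` class, the field names, and every number in it are hypothetical placeholders, not results from anyone's tests in this thread, and nothing here calls a detector API.

```python
from dataclasses import dataclass


@dataclass
class Version:
    name: str
    sounds_like_me: int            # 1-5, your own read-aloud rating
    minutes_to_fix: float          # time you spent on manual fixes
    detector_scores: list[float]   # percent "AI" from each detector you ran


def passes(version: Version, max_ai_percent: float) -> bool:
    """True if every detector score is within your risk tolerance."""
    return all(score <= max_ai_percent for score in version.detector_scores)


def winners(versions: list[Version], max_ai_percent: float) -> dict[str, list[str]]:
    """Names of the versions that win each of the three checks."""
    best_voice = max(v.sounds_like_me for v in versions)
    least_fixing = min(v.minutes_to_fix for v in versions)
    return {
        "sounds most like you": [v.name for v in versions if v.sounds_like_me == best_voice],
        "needs the least fixing": [v.name for v in versions if v.minutes_to_fix == least_fixing],
        "acceptable detection": [v.name for v in versions if passes(v, max_ai_percent)],
    }


# Placeholder numbers only. Replace them with your own notes.
versions = [
    Version("manual edit", 5, 40.0, [20.0, 35.0]),
    Version("Writesonic + fixes", 2, 25.0, [90.0, 10.0]),
    Version("Clever Ai Humanizer + fixes", 4, 15.0, [30.0, 25.0]),
]
for check, names in winners(versions, max_ai_percent=40.0).items():
    print(f"{check}: {', '.join(names) or 'none'}")
```

The `max_ai_percent` threshold is your own risk tolerance, so pick it before you look at the scores.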

Small side note. You will see some typos in my post here too. That is normal human text. Slight inconsistency, minor errors, non perfect punctuation. If a tool keeps removing every bit of that, detection tools will keep flagging it and readers will feel the “AI smoothness”.

You’re not using Writesonic “wrong.” You’ve pretty much hit the ceiling of what that tool can do right now.

My experience lines up partly with @mikeappsreviewer and @mike34, but I’d push back on one thing: I think Writesonic’s Humanizer is less a “humanizer” and more a glorified paraphraser with training wheels. If your goal is to consistently dodge AI detectors, it’s just not built for that level of nuance.

Where I see it fall apart most:

- It flattens voice instead of shaping it

- It over-simplifies concepts that shouldn’t be dumbed down

- It keeps structural AI fingerprints like repetitive rhythm and predictable transitions

- It introduces enough small errors to be annoying, but not the right kind of “human noise”

You can kind of tell the underlying logic is: shorten, swap synonyms, break up sentences, call it a day. That might help on the margin with some detectors, but the outputs still feel AI-ish when you read them top to bottom. That “ESL textbook” vibe @mike34 mentioned is spot on.

Now, where I slightly disagree with both of them:

They’re both treating AI detectors as something you just “check against.” I think if passing AI checks is a hard requirement for you, you can’t rely on any one-click humanizer at all. Detectors are too unstable, and humanizers are too pattern-based. The only setup that consistently works in real projects looks more like:

- AI for structure and boring connective tissue

- You for voice, examples, and opinionated bits

- Optional humanizer to lightly roughen surfaces, not fully rewrite

On alternatives:

If you’re already comparing, then yeah, Clever Ai Humanizer is worth putting into your workflow. Not because it’s “magic” but because it tends to keep tone and nuance closer to your input, and that’s actually what most detectors react to indirectly. If you feed it strong text with a clear persona, it typically doesn’t wipe that out the way Writesonic often does.

If you want to dig into how it really handles tone, detection, and longer content, this breakdown is solid:

deep dive review of Clever Ai Humanizer for natural sounding content

That’s basically a “Clever Ai Humanizer Review” in long form: covers detection performance, pricing, and how to use it on blog posts and niche topics without losing your style.

Blunt answer to your actual question:

- No, you’re probably not misusing Writesonic.

- Yes, there are better fits for what you want, with Clever Ai Humanizer being one of the few that makes sense to test.

- And if you’re expecting any tool to turn a robotic draft into a human article with one click, that expectation is the real problem, not your process or which app you picked.

Also, tiny side note: if something costs $39 a month and you still have to spend 20 to 30 minutes fixing every piece, you’re paying for pain, not productivity.

You’re not crazy and you’re not “using it wrong.” Writesonic’s AI Humanizer caps out where you’re already seeing it.

Let me add a different angle from what @mike34, @sterrenkijker and @mikeappsreviewer already covered, without rehashing their workflows.

Where I think Writesonic fundamentally misses the mark

- It treats “human” as “shorter, simpler, more generic.” That’s great for grade‑school reading levels, terrible for anything expert or brand-specific.

- It doesn’t add contextual variation. Real humans change rhythm: long reflective lines next to abrupt punchy ones. Writesonic mostly flattens that variety.

- It rarely injects stance. Human writing usually has subtle bias, preference, or emotion. Writesonic mostly stays neutral and safe, which is exactly the texture detectors and readers spot.

So even if a paragraph occasionally passes an AI detector, a page of it still feels machine-shaped.
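
If you want a crude number for the rhythm point, you can look at how much sentence length varies across a piece. This is a minimal Python sketch under loud assumptions: the splitter is naive, `sentence_lengths` and `rhythm_spread` are hypothetical helper names, and I am not claiming any detector works this way. It is only a proxy for the flat versus varied feel described above.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split on ., ! and ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm_spread(text: str) -> float:
    """Population standard deviation of sentence lengths, in words."""
    lengths = sentence_lengths(text)
    return statistics.pstdev(lengths) if len(lengths) > 1 else 0.0


# Made-up sample text, only to show the difference.
flat = "The tool is simple. The text is short. The tone is plain."
varied = (
    "No. I tested it on three long articles over two weeks "
    "and the results barely moved. Rough."
)
print(f"flat: {rhythm_spread(flat):.1f}, varied: {rhythm_spread(varied):.1f}")
```

A higher spread just means the sentence lengths swing more. It does not tell you whether the writing is good, so read the piece out loud anyway.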

Where I disagree slightly with others: I don’t think the main problem is the detectors. The problem is that “one-click humanization” fights how humans actually write. Most tools, including Writesonic, are optimizing for local paraphrase, not global voice.

Clever Ai Humanizer in that context

Since it keeps coming up, here is a more blunt breakdown of Clever Ai Humanizer as a tool in the toolbox, not a silver bullet.

Pros

- Better tone preservation. If your input already has a bit of personality, it tends to keep that instead of scrubbing it into corporate mush.

- Less over-simplifying on technical phrases. You’re less likely to get “grabbing carbon from the air” type rewrites.

- Plays nicer with “medium formal” or conversational blog tone. You can keep a relaxed voice without everything turning into children’s copy.

- At the moment, the pricing and free availability make it a low-risk experiment compared with a fixed $39/month.

Cons

- Still not a full voice engine. It won’t magically learn “your” voice over time; it reacts to what you feed it. Garbage or bland in means bland out.

- Can occasionally introduce awkward phrasing when the source is already highly polished. You still need to read and trim.

- Detection is better on average, but not guaranteed. There will always be edge cases where some detector panics.

- Not ideal for super formal academic or legal content, where its attempts at natural tone can make things sound slightly too casual.

So I’d frame Clever Ai Humanizer as a “styling and smoothing layer” that tends to do less damage to your intent than Writesonic, not as a detector killer.

How I’d adjust your approach without repeating everyone’s step lists

Instead of more tools, tweak what you ask from them:

- Use AI (any model) mainly for structure, research reminders, and transitions, not final sentences.

- Use something like Clever Ai Humanizer on sections that feel stiff, but only after you inject a bit of your reality: opinions, mini-rants, quick anecdotes, specific numbers, or references to your own process.

- Keep at least a few “rough edges” on purpose. Short fragments, slightly odd but natural metaphors, occasional informal questions. That irregularity is something Writesonic does not synthesize and most detectors do not expect.

Where I’ll push back a little on others: I don’t think you always need to rewrite 20–30 percent of the text manually if you hate editing. You do need to manually touch the “spine” of each piece:

- Hook

- A few key transitions

- The conclusion

If those three parts sound like you, readers and clients will forgive a lot of AI-sounding connective tissue in the middle.

Very short verdict:

- No, you are not misusing Writesonic. You’ve basically reached its design ceiling.

- Yes, Clever Ai Humanizer is worth trying, but only as a helper layered on top of your own voice, not a solo operator.

- The more your content depends on tone, nuance and expertise, the less any single “humanizer” can replace your own rewrites, regardless of what @mike34, @sterrenkijker or @mikeappsreviewer prefer in their setups.