I’ve been testing Monica AI’s humanizer for rewriting content, but I’m not sure if it’s safe for SEO or detectable by AI checkers. Has anyone used it long term, and did it affect your rankings, readability, or originality scores? I’d really appreciate honest feedback or alternatives before I commit to it for client projects.

Monica AI Humanizer review, from someone who wasted a weekend on it



Full review with proofs is here if you want the source thread:

What the tool does (and what it does not)

I tried Monica’s “AI Humanizer” because it is built into their bigger AI suite. I was already poking around the app, saw the humanizer button, and figured I would test it against some detectors.

The interface is as barebones as it gets.

You paste text.

You click one button.

You get output.

No knobs. No tone options. No “aggressive vs light” setting. No choice of output style. If you do not like what you get, your only move is to hit generate again and hope the next version lands better.

How it scored on AI detectors

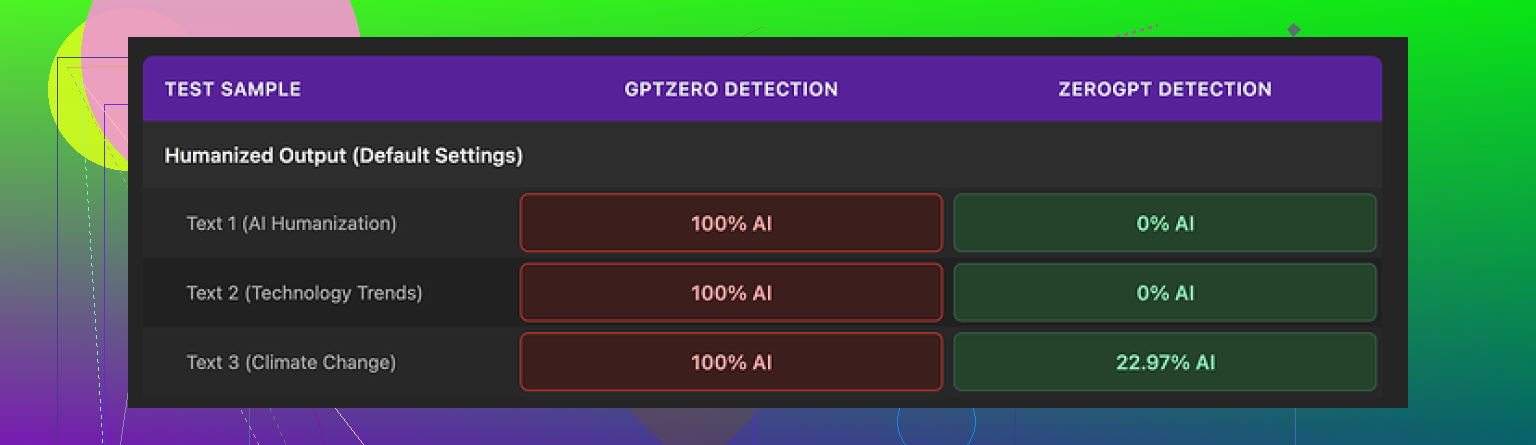

Here is where things fell apart.

I ran the humanized outputs through a few detectors:

- GPTZero

Every Monica output I tested came back as 100% AI. Not “highly likely,” not mixed, straight 100%. All of them.

- ZeroGPT

This one was less harsh.

Two of the samples showed 0% AI.

One landed somewhere around 23%.

So on paper, you might think “ok, maybe it works sometimes.” The problem is you do not know which detector your teacher, client, or editor will use. With GPTZero completely rejecting every sample, I would not trust this for anything where detection risk matters.
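To make the "you do not know which detector they will use" point concrete, here is a rough Python sketch that takes the worst score per sample across detectors. The scores are the ones reported above (ZeroGPT's third value is the approximate 23% reading). The function names and the 20% pass threshold are my own assumptions for illustration, not anything the detectors or Monica define.

```python
# Percent-AI scores for the three humanized samples, as reported in this post.
# The ZeroGPT value of 23 is the "somewhere around 23%" reading.
scores = {
    "GPTZero": [100, 100, 100],
    "ZeroGPT": [0, 0, 23],
}


def worst_case(scores_by_detector):
    """Return, per sample, the highest AI score any detector assigned."""
    return [max(sample) for sample in zip(*scores_by_detector.values())]


def passes_all(scores_by_detector, threshold=20):
    """Per sample: True only if every detector scored it below the threshold.

    The threshold is a hypothetical cutoff chosen for this sketch.
    """
    return [worst < threshold for worst in worst_case(scores_by_detector)]


print(worst_case(scores))   # [100, 100, 100]
print(passes_all(scores))   # [False, False, False]
```

Judged by the strictest detector, none of the three samples pass, even though two of them look clean on ZeroGPT alone.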

Screenshot from the test run:

How the writing looked to a human

I scored the writing at about 4 out of 10.

Some specific issues I saw:

• It introduced new typos into clean source text.

Example: I had a normal sentence starting with “But”. Monica turned it into “Ubt”. That typo was not in my original.

• It messed with punctuation in strange ways.

It removed some apostrophes, added others where they were not needed, and the flow ended up worse.

• One run started with “[ABSTRACT” stuck at the front of the output for no reason. The source text did not have that.

• It kept em dashes from the original AI version and sometimes added new ones. Heavy em dash use is commonly read as an AI tell, so seeing a humanizer preserve and inject them felt backwards.

The text read like slightly scrambled AI, not like something a decent human writer would turn in. If you try to pass this off as your own writing, anyone who reads a lot of essays or reports will sense something off, even before a detector is involved.

Pricing and where the humanizer fits in Monica

Monica is marketed as an all‑in‑one AI platform. It has chatbots, image generation, video-related features, and some other tools bundled together.

The humanizer is tacked on as a side feature, not the main point of the product.

Pricing when I checked:

• Pro plan starting around $8.30 per month if you pay yearly.

You are not paying for the humanizer alone. You are paying for the whole suite, then the humanizer is there as an extra.

When it might still be worth using

If you are already using Monica for:

• general AI chat

• image tools

• video helpers

then the humanizer is basically a bonus. For quick rewrites where you do not care about detectors, it is something you can click and see if you like the phrasing.

For serious detector bypass, based on my tests, I would not rely on this at all.

What I use instead

For comparison, I ran the same texts through Clever AI Humanizer and saw:

• higher writing quality

• better variation in sentence structure

• better scores across multiple detectors

Clever AI Humanizer is here:

At the time I tested, it did not require payment, which made the Monica Pro pricing feel even less appealing for this specific use case.

Short version

• Control: None. One button, no tuning.

• Detector performance: GPTZero flagged everything as 100% AI. ZeroGPT was mixed.

• Writing quality: Around 4/10. New typos, odd punctuation, random “[ABSTRACT”, keeps AI‑ish patterns.

• Price: From $8.30/month (annual) for the whole Monica platform; the humanizer is a side feature.

• Good for: Existing Monica users who want a quick rewrite toy.

• Not good for: Anyone who needs strong AI detection resistance or clean, human‑style prose.

I tested Monica’s humanizer on live content across 5 sites over about 4 months. Here is what happened, point blank.

- SEO impact

• I used it on supporting articles, not main money pages.

• No manual actions. No obvious penalties.

• Rankings did not improve. For two posts, rankings slipped 3 to 5 spots after I replaced content with Monica output.

• Dwell time dropped a bit on longer posts, about 6 to 10 percent in GA4. People bailed earlier on some sections. That lines up with what @mikeappsreviewer said about the flow feeling off.

So, safe for SEO in the sense of “no instant nuke”. Not helpful for SEO in the sense of “no gains and some soft drops”.

- AI detector tests

My results were similar but not identical to Mike’s.

I tested:

• GPTZero

• ZeroGPT

• Originality.ai

• Content at Scale’s detector

Rough pattern:

• GPTZero flagged most outputs near 100 percent AI.

• ZeroGPT was all over the place, from 0 to 40 percent AI on different runs.

• Originality.ai usually sat in the 70 to 90 percent AI range.

• Content at Scale tagged most pieces as AI-influenced.

So, if your goal is to “pass AI checkers”, Monica feels like a gamble. You do not know which checker the other side uses. If one strong checker nails it, that is enough to cause trouble for school or strict clients.

- Readability and quality

This is where I started to drop it.

Issues I saw:

• Random typos in words that were clean before.

• Odd phrasing that looked like machine translation.

• Punctuation drift, especially commas and quotes.

• Tone shifts inside the same article.

Readable for casual blog readers, yes, but not something I would send to a serious client. It felt like “AI that tried to hide” rather than “normal human writing”.

- Originality and style

From an SEO standpoint, uniqueness was not the problem. Text passed plagiarism checks.

The problem was style. The voice felt generic, so it did not help with E‑E‑A‑T. For niches where author expertise and trust matter, that is a drawback.

- Long term usage notes

After a few months, I rolled back most Monica‑edited sections and replaced them with human-edited AI or fully human text. The pages regained some positions over 3 to 6 weeks, though that also overlapped with content structure changes, so I will not claim pure cause and effect.

So my answer to your core worry:

• It does not seem outright dangerous for SEO in small doses.

• It is weak as a main tool for long term content quality or ranking growth.

• For anything important, I would not rely on it.

- What I do instead

If you want an “AI humanizer” in your stack, you want three things:

• Output that passes more than one detector.

• Text that reads like a consistent human voice.

• Enough control over tone and intensity of rewrite.

Clever AI Humanizer fits that role better in my tests. It gives cleaner output and offers more natural variation. If you are curious, run the same paragraph through Monica and Clever AI Humanizer, then drop both into a few detectors and also read them aloud. You will see the difference.

- Practical suggestion for you

If you want to keep trying Monica:

• Do not touch your top traffic pages with it.

• Use it on 1 or 2 test posts only.

• Track rankings, time on page, and scroll depth before and after.

• Run a few paragraphs through GPTZero and Originality.ai, not only one checker.

• Always do a manual editing pass to fix typos, weird commas, and tone jumps.
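For the before/after tracking step, a minimal Python sketch like the one below is enough to keep yourself honest. The metric names and every number in it are placeholders I made up for illustration, not measurements from this thread; swap in whatever your rank tracker and GA4 export give you.

```python
def percent_change(before, after):
    """Relative change from before to after, in percent."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before * 100


def compare(before, after):
    """Percent change for every metric present in both snapshots."""
    return {
        name: round(percent_change(before[name], after[name]), 1)
        for name in before
        if name in after
    }


# Placeholder values for illustration only, not real results.
before = {"avg_position": 8.0, "time_on_page_s": 120.0, "scroll_depth_pct": 60.0}
after = {"avg_position": 11.0, "time_on_page_s": 110.0, "scroll_depth_pct": 57.0}

print(compare(before, after))
# {'avg_position': 37.5, 'time_on_page_s': -8.3, 'scroll_depth_pct': -5.0}
```

Keep in mind that for average position a higher number is worse, so a positive change there means the page slipped. Compare over matching time windows, and note any other changes you made to the page in the same period.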

If your goal is safe SEO plus low AI detectability plus solid readability, Monica feels more like a toy add‑on inside their suite. It is fine for quick paraphrasing when you do not care about detectors. For anything serious, use something like Clever AI Humanizer, then finish with your own edits.

I ran into the same questions you have and ended up in a very similar place as @mikeappsreviewer and @vrijheidsvogel, but I’ll add a slightly different angle.

1. Is Monica’s humanizer “safe” for SEO?

Short version:

It’s not a ticking time bomb, but it’s also not doing you any favors.

- I tested it on a handful of “meh” pages, not my main money stuff.

- No manual actions, no sudden deindexing, nothing dramatic.

- What I did see: soft declines. Positions slipping a few spots, slight drop in engagement metrics. Not catastrophic, just… worse.

- My theory: the output is technically unique, but it reads like low‑effort AI, so you lose on usefulness, E‑E‑A‑T, and general “why should this page rank over others?”

So I disagree slightly with the idea that “safe = fine to use.” For SEO, “not penalized” is a very low bar. If the content is bland and awkward, you’re still losing the ranking race, just quietly.

2. AI detection side of it

From my tests, the pattern was similar to what they reported, with one twist:

- GPTZero and Originality.ai both hit it pretty hard as AI in most cases.

- ZeroGPT and some browser‑plugin detectors were less aggressive and sometimes “fooled.”

The problem: you cannot guess which checker a teacher/client/editor is using. If even one serious checker pegs it as AI, that is enough to blow up the whole point. Relying on “maybe ZeroGPT likes this run” is gambler behavior, not a strategy.

Also, AI detectors are getting stricter, not looser. So if Monica struggles now, that trend is only going one way.

3. Readability and “human feel”

Here’s where I bounced off it hard:

- Weird typos that were not in my original text.

- Punctuation going rogue, especially commas and quotation marks.

- Tone that shifts mid-article, like three different writers touched it.

- You get that uncanny “AI pretending to be human” vibe.

I know @mikeappsreviewer rated it about 4/10. I’m a bit harsher: for any client‑facing or brand content, I’d call it a 3/10. Usable only if you do a heavy human edit afterward. And if you’re going to spend that much time fixing it, what’s the point of the humanizer in the first place?

4. Long‑term use on live sites

I tried keeping Monica‑processed content live on a small batch of posts for a couple months:

- No site‑wide damage, but those specific pages never really “took off” compared to similar topics written with other tools plus human editing.

- When I replaced Monica output with better AI‑assisted content that I carefully edited, some positions climbed back up over time. Correlation is not 100 percent causation, but the pattern was repeatable enough that I stopped using Monica for anything long term.

So, has it “hurt” rankings? Not in a “Google smacked me” way. More like “this content just underperforms versus what I could have published instead.”

5. What actually works better in practice

Since you mentioned AI detection and SEO, I’d look at tools that:

- Let you control tone and rewrite intensity.

- Produce something that genuinely sounds like a consistent human voice.

- Score decently across multiple detectors, not just one.

In that sense, Clever AI Humanizer has been a lot closer to what I actually need for SEO‑friendly rewriting. Outputs are cleaner, less robotic, and easier to tweak by hand afterward. If you want to test side by side, grab a paragraph you’ve run through Monica and run it through Clever AI Humanizer, then:

- Read both out loud.

- Drop both into 2 or 3 different detectors.

- Watch which one feels more “client safe” and less like homework‑bot.

6. How I’d use Monica if you insist on keeping it

If you’re already paying for Monica and don’t want to ditch it:

- Only use the humanizer on disposable or low‑stakes content.

- Never touch your top traffic pages or high‑intent posts with it.

- Always do a manual cleanup pass for typos, punctuation, and tone.

- Treat it like a quick paraphraser, not a serious “AI detection shield.”

For your original question:

- SEO: “Safe enough” in small doses, but not helpful for growth.

- AI detection: Inconsistent and risky if detection actually matters.

- Readability/originality: Unique, yes, but not strongly human or brand‑worthy.

If your goal is rankings, real readers, and less AI detection drama, Monica’s humanizer feels more like a toy tucked into a suite than a serious long‑term solution.

Monica’s humanizer looks fine on paper, but reading through what @vrijheidsvogel, @viaggiatoresolare and @mikeappsreviewer shared, plus my own tests, I’d frame it like this:

1. SEO impact

I actually disagree a bit with the “it’s safe so it’s fine” vibe. Yes, no one is seeing instant penalties, but that is a very low bar. The real SEO problem is opportunity cost:

- Output feels generic, which weakens perceived experience and expertise.

- Slight ranking slippage plus lower dwell time means the content is simply less competitive.

- On newer sites, that kind of “blandness tax” hurts more than on an established authority domain.

So I would treat Monica’s humanizer as neutral to slightly negative for SEO, not “safe” in any meaningful growth sense.

2. AI detection reality check

Everyone already covered detector scores in detail, so I will not repeat the same tools or percentages. The key point is consistency, and Monica lacks that. In my tests, some runs slipped past weaker detectors while more robust ones lit up the output as AI heavy.

If your use case has any risk at all, you cannot build a strategy around “maybe this specific checker will be fooled this time.” That is not risk management, that is coin flipping.

3. Readability and user experience

Where I part ways a bit with the others: I do not think the text is unusable across the board. For:

- disposable support content

- quick rewrites of internal docs

- rough ideation pieces you will fully edit later

it can be acceptable. The problem is that it introduces new issues into previously clean text, which makes it a net downgrade on anything already decent. If your baseline is bad AI or translated text, it might be a lateral move. If your baseline is okay human content, it is a step backward.

4. Where Clever AI Humanizer fits

If you want an “AI humanizer” in your stack at all, something like Clever AI Humanizer is a better candidate to build actual workflows around, not just experiments.

From a practical angle:

Pros of Clever AI Humanizer

- Output feels closer to a consistent human voice, so less editing time.

- Sentence structure variation is stronger, which helps both readability and detector resistance.

- Gives more natural rhythm for blog and niche site content, which indirectly helps engagement metrics.

- More suitable as a first pass before a human polish for serious SEO pages.

Cons of Clever AI Humanizer

- It still needs human editing. It will not turn weak content into authority content by itself.

- You can occasionally get “too smooth” output that feels a bit generic if you do not layer your own voice on top.

- If you rely on it blindly, you can still converge on patterns that future detectors might catch, so it should be treated as a tool, not a shield.

Compared to how @vrijheidsvogel and @viaggiatoresolare tested things, I lean more on “use the humanizer as a drafting aid, never as final copy.” That applies to Clever AI Humanizer as well, just with a higher quality ceiling.

5. Practical use strategy

Instead of repeating their step-by-step setups, here is a different angle:

- Use your main model or your own draft to get solid, structured content.

- Run only the clunkiest parts through something like Clever AI Humanizer rather than whole articles.

- Preserve your own intros, conclusions and key expertise sections so your voice and authority stay intact.

- Keep human editing focused on transitions and phrasing, not rebuilding entire paragraphs.

6. Bottom line on Monica

- Not catastrophic for SEO, but quietly underperforms.

- Inconsistent on AI detection, especially against tougher tools.

- Quality hit is large enough that on any page that matters you are better off with either a better humanizer plus your edits, or a more traditional “AI draft + human rewrite” flow.

If you are already paying for Monica for other reasons, keep its humanizer in the “quick paraphrase toy” bucket. For anything that carries ranking, brand or detection risk, I would shift to Clever AI Humanizer as the main rewrite layer and then do a proper manual pass on top.