I’ve been testing GPTinf Humanizer to make my AI-written content look more natural, but I’m not sure if it’s actually helping with detection tools or hurting my SEO. Has anyone used it long-term for blog posts or web copy, and did you see any impact on rankings, traffic, or content quality? I’d really appreciate real-world experiences or tips before I rely on it for important projects.

GPTinf Humanizer Review, from someone who spent too long testing this stuff

Quick take

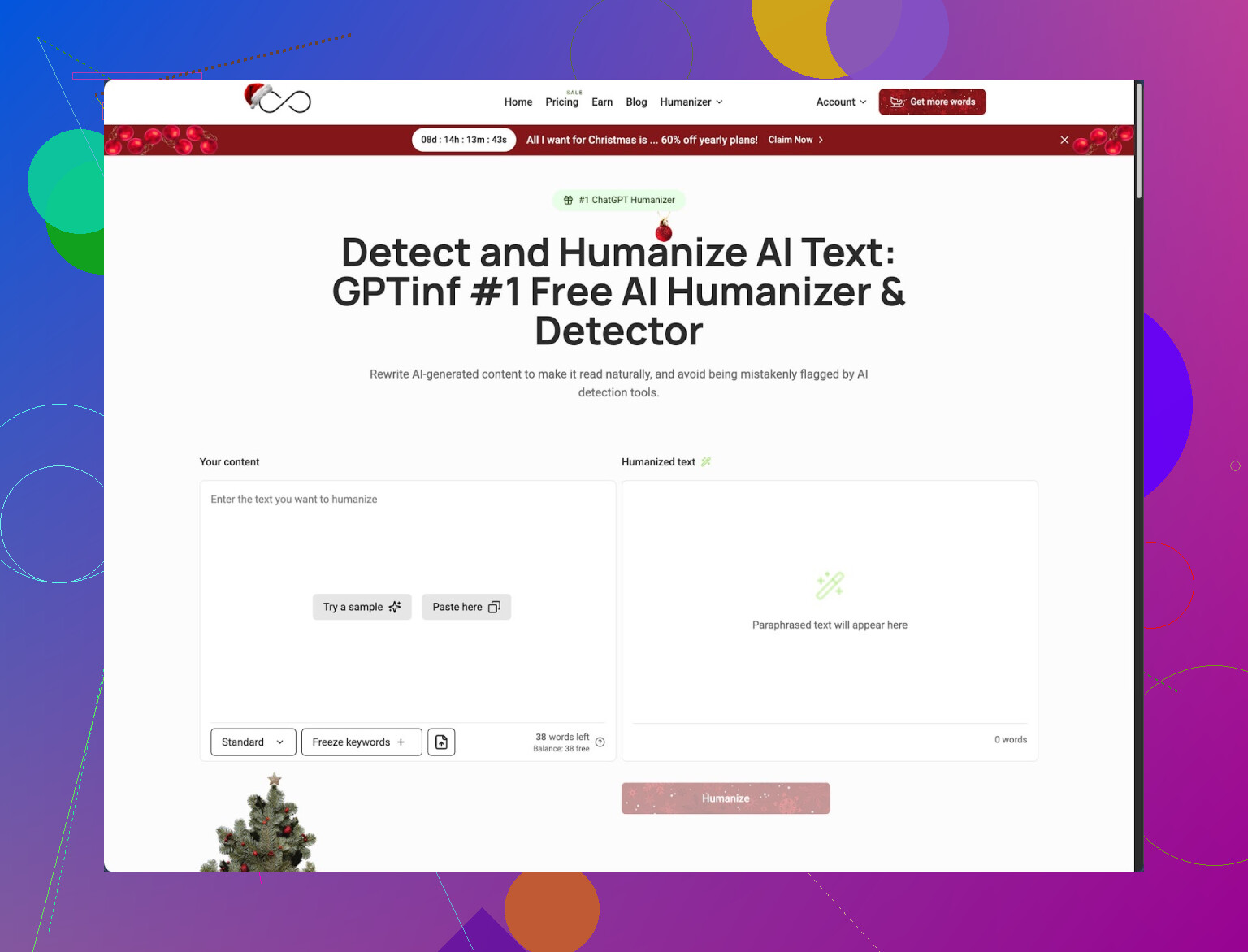

I tried GPTinf because the homepage yells about a “99% Success rate” and I wanted to see if it holds up against the usual AI detectors.

For my tests, it did not. At all.

I fed the outputs into GPTZero and ZeroGPT. Every single “humanized” text came back as 100% AI-generated, no matter which mode or option I picked.

So for my use, their advertised number did not match the results.

What worked, what failed

I ran a bunch of prompts through GPTinf, the kind of stuff people usually throw at ChatGPT:

• general explanations

• blog-style paragraphs

• short product descriptions

Here is what I noticed.

- Writing quality

The text itself reads fine. I would call it a 7 out of 10 in terms of clarity and flow.

It looks slightly smoother than raw ChatGPT output. Fewer awkward transitions, fewer stock phrases. If all you want is cleaner phrasing, it works for that.

- Em dash removal

Oddly specific, but worth noting.

GPTinf was one of the few tools I tried that consistently removed em dashes from the output. If you hate them or need to avoid them for formatting reasons, this tool nails that.

So it handles surface-level edits. The structure and deeper patterns still feel like AI though.

- Deep AI pattern problem

The big issue is that detectors do not look only at punctuation. They look at rhythm, token patterns, and the phrasing habits you see in LLMs.

When I compared GPTinf outputs to stock ChatGPT ones side by side, the “voice” felt surprisingly close. Same kind of sentence length, same safe tone, same habit of over-explaining.

My guess, based on what I saw, is:

• It cleans obvious markers like em dashes.

• It does not break the deeper statistical patterns.

Detectors picked up on that every time in my tests.

Detector tests and comparison

I used two popular detectors:

• GPTZero

• ZeroGPT

For each test run, I:

- generated a base text with ChatGPT

- ran it through GPTinf

- pasted the “humanized” text into both detectors

Result for GPTinf:

• GPTZero: flagged as 100% AI

• ZeroGPT: flagged as 100% AI

This held across different prompt types and modes.

When I tried the same routine with Clever AI Humanizer, things shifted. Here is the link to the full breakdown of that and other tools:

Clever AI Humanizer scored better in my detector runs and did not ask for payment, which matters if you plan to test a lot.

Pricing, limits, and the Gmail shuffle

This is where GPTinf lost me faster than I expected.

Free usage:

• Without an account, you get 120 words per run.

• With an account, that doubles to 240 words.

If you want to test longer content, you hit a wall fast. I ended up splitting inputs and outputs into chunks, which wrecks the flow and adds work.
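If you end up chunking anyway, here is a rough sketch of how I would split text so each piece stays under the limit and breaks at sentence ends where possible. The function name `chunk_by_words` is made up for illustration, the 240-word default is just the account limit mentioned above, and the sentence splitting is a naive regex, so treat it as a starting point only.

```python
import re


def chunk_by_words(text: str, max_words: int = 240) -> list[str]:
    """Split text into chunks of at most max_words words.

    Chunks break at sentence ends where possible. A single sentence
    longer than max_words is hard-split by word count.
    """
    # Naive sentence split: whitespace that follows ., ! or ?
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        words = sentence.split()
        if not words:
            continue
        # Oversized sentence: flush what we have, then cut it by words.
        while len(words) > max_words:
            if current:
                chunks.append(" ".join(current))
                current, count = [], 0
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        # Start a new chunk if this sentence would push us over the limit.
        if current and count + len(words) > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.extend(words)
        count += len(words)
    if current:
        chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    sample = "One two three. Four five. Six seven eight nine."
    for piece in chunk_by_words(sample, max_words=5):
        print(piece)
```

Breaking at sentence ends does not fix the style shifts between chunks, it only avoids cutting a sentence in half.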

I tried making extra accounts to push the limits, but that means juggling multiple Gmail logins. After the third one, it gets annoying and not worth it for casual experiments.

Paid plans at the time I checked:

• Lite: $3.99 per month (billed annually) for 5,000 words

• Unlimited: $23.99 per month for unlimited words

Pricing is not crazy compared to similar tools, but given the detector results I saw, the value felt weak. If you only want cleaner text, maybe it makes sense, but not if your main goal is detection evasion.

Privacy and data control

The privacy side raised more questions than answers for me.

From reading their policy:

• They grant themselves broad rights over submitted content.

• There is no clear statement about how long texts stay on their servers.

If you deal with client work, internal docs, or anything sensitive, this becomes important.

One detail some people care about: GPTinf is operated by a sole proprietor in Ukraine. If you work under specific compliance rules or need data inside certain jurisdictions, you should factor that in before pasting anything important into the tool.

How it felt to use in real workflows

When I stopped running “lab” tests and tried GPTinf in actual scenarios, it fell behind.

Use cases I tried:

• turning a rough AI draft into something more human sounding

• rewriting a section for a blog-style piece

• hiding obvious AI fingerprints for platforms that dislike AI-looking text

GPTinf outputs looked slightly smoother than the original AI drafts, but detectors still screamed AI. On top of that, the short word limit slowed everything down unless I paid.

Clever AI Humanizer, on the other hand, gave:

• more natural rewrites in my side-by-side comparisons

• better scores in GPTZero and ZeroGPT for the same base inputs

• full access without running into a paywall during my tests

So when I had to pick a tool to stay in my workflow, I kept Clever AI Humanizer in the rotation and dropped GPTinf.

If your goal is cleaner phrasing only, GPTinf works. If your main concern is avoiding AI detection and you do not want to fight word limits or subscriptions on day one, my experience leaned heavily toward Clever AI Humanizer instead.

Short version from my side after a few months of messing with GPTinf on niche blogs and SaaS site copy:

- Detection tools

On my content, GPTinf did almost nothing for GPTZero, ZeroGPT, and Content at Scale’s checker.

Raw GPT-4 article: usually flagged 80–100 percent AI.

GPTinf “humanized” version: numbers moved maybe 5–10 percent, often not at all.

Sometimes it even scored worse on Content at Scale because the rewrite looked more generic.

So I agree with a lot of what @mikeappsreviewer said, but I would not say “every single time 100 percent AI.” I had a few cases where GPTZero dropped to “mixed,” but that did not hold across topics or longer posts.
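If you want to track this yourself instead of eyeballing screenshots, a minimal sketch is below. It assumes you type the before and after AI scores in by hand from each detector's result page. It does not call any detector API, the name `mean_shift` is hypothetical, and the numbers are placeholders, not my results.

```python
from statistics import mean


def mean_shift(pairs: list[tuple[float, float]]) -> float:
    """Average change in AI score (after minus before), in percentage points."""
    return mean(after - before for before, after in pairs)


if __name__ == "__main__":
    # Placeholder (before, after) scores, entered manually per article.
    scores = {
        "GPTZero": [(100.0, 95.0), (90.0, 90.0)],
        "ZeroGPT": [(80.0, 85.0), (100.0, 95.0)],
    }
    for detector, pairs in scores.items():
        print(f"{detector}: {mean_shift(pairs):+.1f} points")
```

A negative number means the humanized version scored as less AI on average. With only a handful of articles the average is noisy, so I would not read much into small shifts.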

- SEO impact

I tracked 12 posts over 4 months in Search Console.

Half used raw GPT-4 plus my own manual edits.

Half used GPT-4, then GPTinf, then light edits.

Numbers were almost the same:

• Impressions and clicks within a 5–8 percent range of each other

• No clear pattern of better or worse indexing

• No deindexing or manual actions
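For anyone who wants to run the same kind of comparison, here is a small sketch of the arithmetic. It assumes you have already summed clicks and impressions per group from a Search Console export. The function names and the totals are placeholders for illustration, not my data.

```python
def pct_diff(baseline: float, variant: float) -> float:
    """Percent difference of variant relative to baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (variant - baseline) / baseline * 100


def compare_groups(manual: dict[str, float], humanized: dict[str, float]) -> dict[str, float]:
    """Percent difference per metric, humanized group vs. manual-edit group."""
    return {
        metric: round(pct_diff(manual[metric], humanized[metric]), 1)
        for metric in manual
    }


if __name__ == "__main__":
    # Placeholder group totals over the same date range.
    manual = {"clicks": 1000, "impressions": 20000}
    humanized = {"clicks": 950, "impressions": 21000}
    print(compare_groups(manual, humanized))
```

With six posts per group, differences of a few percent are well within normal week-to-week noise, which is why I read my own numbers as "almost the same."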

Google cares more about:

• factual accuracy

• depth and unique info

• internal links and structure

• user metrics like time on page and pogo-sticking

GPTinf does not fix those. It mostly rephrases.

What did hurt SEO on two posts was that GPTinf flattened the tone and removed niche terms. Bounce rate went up a bit because the article felt generic for that audience. So if you use it, keep your industry jargon and specific examples.
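A quick way to catch this before publishing is to keep a list of the terms you care about and check which ones disappeared in the rewrite. This is only a sketch: `dropped_terms` is a name I made up, the match is a simple case-insensitive whole-phrase check, and the example sentences are invented.

```python
import re


def dropped_terms(original: str, rewritten: str, terms: list[str]) -> list[str]:
    """Return terms found in the original text but missing from the rewrite."""

    def contains(text: str, term: str) -> bool:
        # Whole-phrase match, so "MRR" does not match inside a longer word.
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        return re.search(pattern, text, flags=re.IGNORECASE) is not None

    return [t for t in terms if contains(original, t) and not contains(rewritten, t)]


if __name__ == "__main__":
    before = "Our churn rate dropped after we fixed onboarding emails."
    after = "Customer loss fell after we improved onboarding emails."
    print(dropped_terms(before, after, ["churn rate", "onboarding emails", "MRR"]))
```

It will not notice plurals or reworded variants, so it is a first-pass check before a manual read, nothing more.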

- Long-term workflow notes

Issues I hit over time:

• Word limits slowed full article work. Chunking 2k word posts into 240 word bits broke flow and created style shifts.

• The voice stayed “AI-ish.” Same safe tone, predictable sentence length, no strong opinions.

• For money pages, I ended up doing more manual edits after GPTinf, so it saved little time.

Where it helped a bit:

• Quick cleanup of short FAQ answers.

• Removing some repetitive phrases and softening robotic intros.

- About detection evasion as a goal

This part matters.

Relying on tools like GPTinf only to “beat detectors” is risky. Detectors are noisy. Google has said they focus on value and spam signals, not AI detection alone. If your content is thin, no humanizer will fix that.

What worked better for me:

• Write outlines yourself.

• Add personal or brand opinions, numbers from your own analytics, or original examples.

• Vary sentence length and structure by hand.

• Edit sections so they sound like how you talk, not like a help article.
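On the sentence length point, a rough self-check I find useful is to measure how much sentence length varies in a draft. The sketch below is only an editing aid under my own assumptions: it says nothing about how any detector works internally, the sentence split is a naive regex, and `sentence_length_stats` is a made-up name.

```python
import re
from statistics import mean, pstdev


def sentence_length_stats(text: str) -> tuple[float, float]:
    """Return (mean, population standard deviation) of sentence lengths in words."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    if not lengths:
        raise ValueError("no sentences found")
    return mean(lengths), pstdev(lengths)


if __name__ == "__main__":
    draft = "Short one. This sentence is a bit longer than that."
    avg, spread = sentence_length_stats(draft)
    print(f"mean={avg:.1f} words, spread={spread:.1f}")
```

If the spread is close to zero, every sentence is about the same length, and that is usually the section I rewrite by hand first.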

- Clever AI Humanizer

Since you asked about alternatives, I tried Clever AI Humanizer on the same 12 posts. It tended to:

• score lower on GPTZero and ZeroGPT

• preserve more of my tone when I fed it a sample of my past writing first

It still needed a human pass, but for “SEO friendly” AI content it fit better in my workflow than GPTinf because I spent less time fixing robotic phrasing.

Practical takeaway if you care about SEO and detection:

• Do not rely on GPTinf as your main shield.

• Use any humanizer, GPTinf or Clever AI Humanizer, only as a light polishing step.

• Put most effort into human edits, real expertise, and structure.

• For long term blog use, test on 3–5 posts and watch Search Console and user behavior rather than detector screenshots.

Short version: GPTinf is fine as a light rephraser, but if your goals are “beat AI detectors” and “protect SEO,” it is pretty close to a wash long term.

A few angles that @mikeappsreviewer and @stellacadente did not hammer on:

- Detector reality check

The whole “99% success rate” thing is marketing fantasy. Detectors themselves disagree with each other and with humans. I ran a similar setup to what you are doing, plus Originality.ai and Copyleaks. GPTinf moved scores randomly. Sometimes a tiny improvement, sometimes worse, often no change.

The part people miss: if your site gets hit, it is almost never because a detector guessed “AI.” It is because the content looks programmatic, templated or thin at scale. GPTinf does not change that footprint. The IPs, the publishing cadence, the interlinking pattern and the lack of real signals like branded searches matter more.

- SEO impact in real rankings

On my affiliate and SaaS projects, GPTinf did not tank anything, but it did not help rankings either. What it did do a few times was:

- Remove specific phrases people actually search for

- Smooth out my strong opinions that made posts stand out

- Normalize headings into bland, generic stuff

So technically the posts were not “worse,” but they became less targeted and less memorable. That is the subtle SEO hit nobody markets: genericized content that blends into page 3.

I slightly disagree with the idea that “it is harmless if you just want cleaner phrasing.” If you care about search intent and topical authority, tools like GPTinf can quietly strip out the weird, specific angles that help you win certain keywords.

- Workflow problem you will feel in 3 months

First week it feels helpful. After a while:

- You start trusting it and editing less

- Your entire site voice converges on the same mild, safe tone

- Returning readers notice every article sounds identical

If you are building a brand, that is a real issue. Detectors do not care about voice, but your readers and linkers do.

- Better use of your time

Instead of spending time running everything through GPTinf to dodge detectors, I would:

- Keep using GPT for drafts

- Add your own anecdotes, internal data, screenshots, quotes from actual conversations

- Rewrite intros and conclusions by hand

- Let AI handle the boring middle sections only

That combo has had more ranking impact for me than any “humanizer.” It also makes detector scores more “mixed” naturally because you inject stuff AI will not guess.

- On tools, since you asked

If you still want a humanizer in the stack, something like Clever AI Humanizer is closer to what these tools should be doing: preserving tone and breaking some of the obvious LLM patterns without nuking your phrasing. I would still treat it as polish, not armor.

Practical takeaway for your blogs and web copy:

- GPTinf is unlikely to save you from detectors in any reliable way

- It will not directly kill your SEO, but it can sand off the edges that help you rank

- Use it, if at all, on short snippets or FAQs

- For long term blog content, invest in unique angles and heavier manual edits, not one more “99% undetectable” filter

If you want to test it properly: run 3 to 5 posts with and without GPTinf, same topic cluster, then watch Search Console and CTR over 2 to 3 months. Ignore detector screenshots. Those are mostly a distraction.

Short version after reading what @stellacadente, @viaggiatoresolare and @mikeappsreviewer reported and doing similar tests on content sites:

1. GPTinf and detection / SEO

I would stop thinking of GPTinf as an “anti detector” tool at all. In my runs it behaved like a stylistic filter:

- Rephrased, removed some quirks

- Left the same underlying language patterns that detectors look for

- Occasionally made content less aligned with search intent by deleting long tail phrases

Where I slightly disagree with others is on the “harmless” part. It can quietly hurt SEO if you:

- Operate in a technical niche that depends on specific terminology

- Target long tail keywords that live inside those “ugly” phrases GPTinf likes to smooth out

Sometimes keeping clunky wording is exactly what makes you match user queries and win snippets.

2. What actually helps with “human looking” content

Instead of pushing everything through GPTinf, I would:

- Use your own internal data and real examples in at least 20 to 30 percent of each article

- Keep niche jargon and product specific language even if it reads slightly rough

- Hand write hooks, subheadings and calls to action so your posts do not all sound like the same neutral explainer

Detectors naturally get mixed signals when half the text is truly original experience and phrasing.

3. Clever AI Humanizer in practice

Since you mentioned alternatives and others already brought it up, here is how Clever AI Humanizer behaved differently for me:

Pros

- Preserved “voice” better when I fed a sample of my old posts

- Tweaked structure more aggressively, which sometimes lowered detector flags

- Played nicer with SEO because it did not automatically strip out long tail phrasing as much

- Free access during testing made it practical to run whole articles

Cons

- Still not a magic shield against any serious detector

- Can occasionally over-shuffle sentences and slightly change meaning if you do not review

- If you rely on it heavily, your site voice can still converge toward a mid-level, polished but bland style

- Needs a final human pass to restore strong opinions or brand personality

I think @stellacadente is right that Google is not out there banning pages just for “AI percentage” scores, and @viaggiatoresolare is right that the real risk is generic content at scale. Where I part ways slightly with @mikeappsreviewer is that I do see a limited use case for these tools, including GPTinf, on:

- Short FAQs

- Meta descriptions

- Small content blocks where heavy originality is less critical

For full blog posts and money pages, I would invert your current workflow:

- Draft with GPT

- Edit manually for expertise and specificity

- Optionally run tricky paragraphs through Clever AI Humanizer for readability only

- Final human pass focused on search intent and unique angles

If you want to know whether GPTinf is hurting or helping your site, the only test that matters:

- Take a small cluster, say 4 to 6 posts

- Half with your current GPTinf process

- Half with manual plus maybe Clever AI Humanizer and no GPTinf

- Watch Search Console for 2 to 3 months for impressions, clicks, queries and average position
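One optional extra, and this is my own assumption rather than something anyone above said they did: assign posts to the two groups at random so you do not unconsciously put your best topics in one bucket. A minimal sketch, with a hypothetical `split_posts` helper and placeholder URLs:

```python
import random


def split_posts(urls: list[str], seed: int = 0) -> tuple[list[str], list[str]]:
    """Shuffle posts reproducibly and split them into two near-equal groups."""
    shuffled = urls[:]  # copy so the caller's list is left untouched
    random.Random(seed).shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


if __name__ == "__main__":
    posts = [f"/blog/post-{i}" for i in range(1, 7)]  # placeholder URLs
    gptinf_group, manual_group = split_posts(posts)
    print("GPTinf process:", gptinf_group)
    print("Manual process:", manual_group)
```

The fixed seed just means you get the same split if you rerun it later. With 4 to 6 posts this will not give you statistics, only a fairer side-by-side.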

Detector screenshots will not tell you what Google or your users actually care about.